Welcome to this edition of Loop!

To kick off your week, I’ve rounded up the most important technology and AI updates that you should know about.

HIGHLIGHTS

AI tools can now unmask anonymous accounts

How Claude discovered 22 security vulnerabilities in the Firefox browser

Science Corp’s implants that can restore vision for the blind

… and much more

Let's jump in!

1. AI tools can now unmask anonymous accounts

We start this week with some worrying research, which suggests that AI is becoming very good at unmasking anonymous accounts - and it's doing it faster and cheaper than anyone expected.

Researchers at ETH Zurich and Anthropic worked on this study together and built a system of AI agents. These agents analyse anonymous posts and were asked to treat them like a puzzle.

For each post, the AI agents scan for any writing quirks, hints about where the user is from, and posting patterns to match the pseudonymous account with a real identity. In order to make those links, the researchers gave them access to Reddit posts, LinkedIn profiles, and interview transcripts.

Interestingly, the system was able to identify up to 68% of people with 90% precision. That completely blows traditional methods, which managed to find almost none, out of the water.
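To make those two numbers concrete, here's a minimal Python sketch. The counts are hypothetical, chosen by me to roughly match the headline figures, and are not taken from the study. Recall is the share of all accounts that were correctly unmasked, while precision is the share of the system's guesses that turned out to be right.

```python
def precision_recall(correct_matches: int, total_guesses: int, total_accounts: int) -> tuple[float, float]:
    """Return (precision, recall) for a de-anonymisation run.

    precision = correct matches / all guesses the system made
    recall    = correct matches / all accounts in the test set
    """
    precision = correct_matches / total_guesses if total_guesses else 0.0
    recall = correct_matches / total_accounts if total_accounts else 0.0
    return precision, recall


# Hypothetical counts (an assumption for illustration, not data from the study)
TOTAL_ACCOUNTS = 1000   # pseudonymous accounts in the test set
TOTAL_GUESSES = 755     # accounts the system was willing to name
CORRECT_MATCHES = 680   # guesses that were actually right

precision, recall = precision_recall(CORRECT_MATCHES, TOTAL_GUESSES, TOTAL_ACCOUNTS)
print(f"precision: {precision:.0%}, recall: {recall:.0%}")
```

In other words, a system like this can stay silent on the accounts it isn't sure about, which is how it keeps roughly nine in ten of its guesses correct.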

But the worrying thing here is the economics. It only cost the team $2,000 to run this experiment and it worked out at roughly $1–$4 per profile. Companies already spend similar amounts on advertising, so it’s a feasible option for them to do this and identify any hidden accounts that are writing negative posts.

It also has real implications for journalists, activists, and dissidents who rely on pseudonyms. While the top AI labs can build safeguards to prevent this behaviour, they can’t restrict how people use open-source models that run locally on their own computers.

With this change, you should assume that everything you post can eventually be traced back to you. Unfortunately, the technology is only going to become better at this over time and will be cheaper for companies, or regimes, to use.

2. Anthropic's Claude found 22 vulnerabilities in Firefox

Moving on to a more positive story, Anthropic has partnered with Mozilla and its AI model, Claude, has found 22 security vulnerabilities in Firefox.

Over the course of just two weeks, Claude uncovered 22 vulnerabilities in the browser's codebase - with 14 of those classified as high-severity. Most have already been patched in Firefox 148, which shipped in February, although a handful will need to wait for the next release.

The team started by pointing Claude at Firefox's JavaScript engine, before they broadened its scope further. Since the browser is open-source and one of the most rigorously tested codebases in the world, it was a great project to test Claude on.

Anthropic found that Claude was very good at spotting flaws, but far less capable when it came to actually exploiting them. The company spent around $4,000 trying to exploit these security vulnerabilities, but only succeeded in two cases.

It's an important finding, as it suggests that these tools are better suited to defensive security work than actually carrying out the attacks.

3. AI startup admits it lied about revenue numbers

The CEO of Cluely, Roy Lee, has admitted that the $7 million annual recurring revenue figure he shared last summer was fabricated.

Lee posted his confession on X, calling it "the only blatantly dishonest thing" he'd said publicly - although his version of events doesn't quite hold up either.

He claimed the interview was a surprise cold call, but TechCrunch’s reporter has shared an email that shows Cluely's PR representative arranged the conversation, shared Lee's number, and confirmed he was expecting it.

If you haven’t heard of Cluely before, the company became famous for helping people to cheat at job interviews and use AI without being detected. Lee and his co-founder built it after he was suspended from Columbia University.

The company raised $5.3 million in seed funding, before it landed a $15 million Series A from Andreessen Horowitz last June. Its growth strategy has leaned heavily on viral stunts, which have worked well so far.

Personally, I’m not surprised the “cheat at everything” guy has lied about his numbers. But it’s a useful reminder that the industry is full of hype right now and we need to be somewhat skeptical about what we read online.

4. AWS launches a new AI agent platform specifically for healthcare

With the huge growth being seen in AI-enabled healthcare, AWS has announced a new platform that can manage AI agents for the sector. The new Connect Health platform is HIPAA-compliant and it already works with the software that hospitals use to store patient records.

AWS has focused on the admin tasks that clinicians typically have to deal with - from scheduling appointments and writing documents to converting a diagnosis into the correct medical code.

While it’s not Amazon’s first product for healthcare, it is the first one that brings AI agents into the sector and ensures that they comply with US regulations. It’s clear that AWS is keen to get a bigger slice of America's $5 trillion healthcare industry.

It’s also worth noting that Amazon has spent roughly $5 billion in recent years to acquire PillPack and One Medical, which could allow the company to deliver prescriptions and organise virtual GP appointments.

Of course, Amazon isn’t alone here. Google has seen great success with its dedicated AI model for medicine, known as MedGemma, while OpenAI and Anthropic have also announced their own healthcare products in recent weeks.

5. AI-generated art can’t be copyrighted in the US

The US Supreme Court has declined to hear the case, so a previous ruling still stands and AI-generated art cannot be copyrighted. The case was brought by Stephen Thaler, a computer scientist who has spent years fighting for this protection.

Back in 2019, Thaler asked the US Copyright Office to register an image that was created by his algorithm, but he was refused on the grounds that it lacked "human authorship." Since then, that decision has been upheld at every level of the US judiciary.

It follows guidance from the US Copyright Office, which confirmed that AI artwork falls outside copyright protection. The US Patent Office has also decided that AI systems should not be named as inventors.

Of course, you can still use AI tools to help with the process and create innovative ideas - but you can’t name the AI itself as the inventor.

The UK Supreme Court has reached a similar decision, as the law states that only humans or companies can be creators, and it’s likely that other Western nations will reach the same consensus.

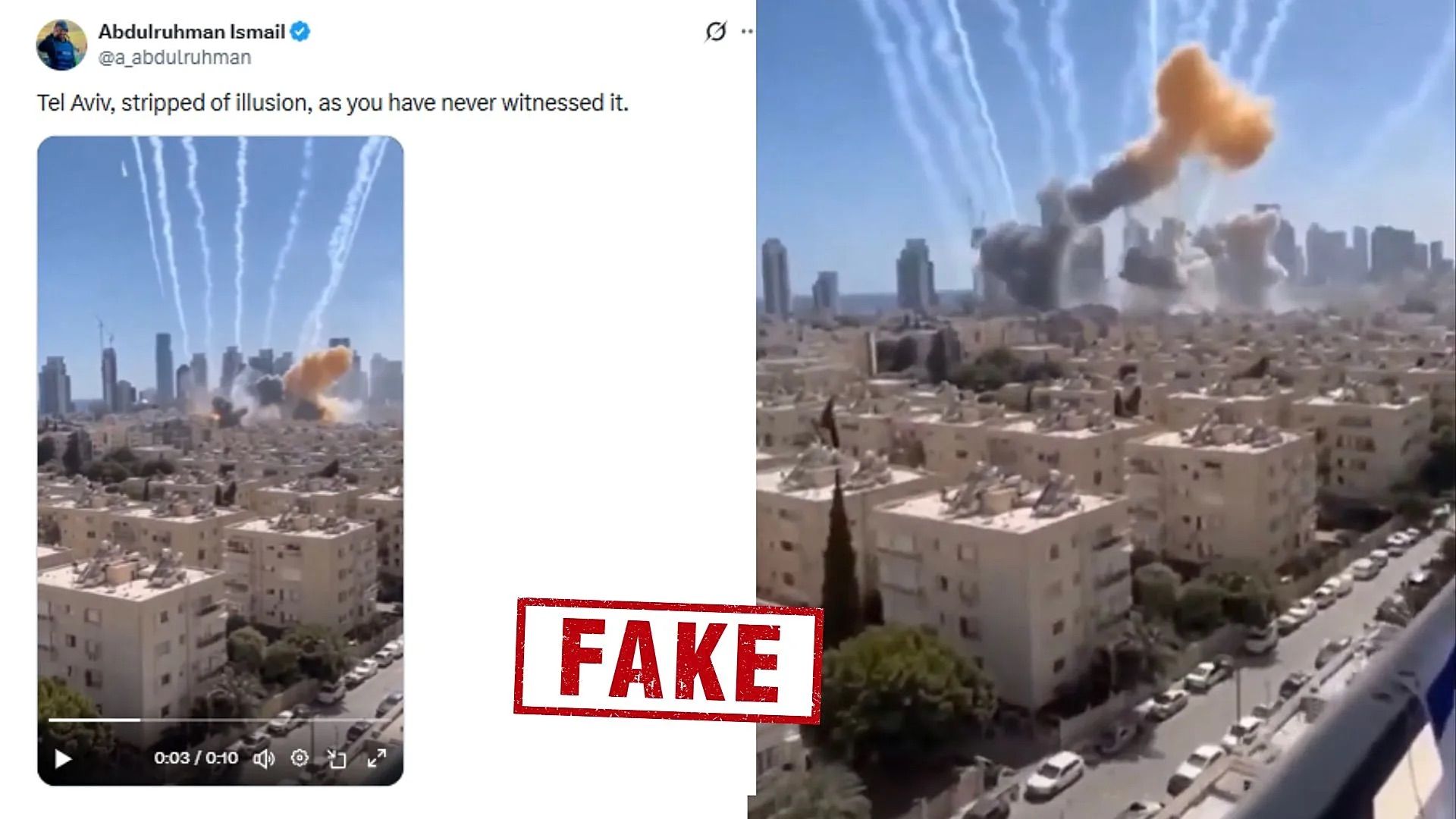

How to spot real content in the era of deepfakes

Following the US war against Iran, we have seen a flood of fake videos and images online - with some claiming to show fighter jets dodging missiles. However, these are often old clips, AI-generated videos, or footage taken directly from video games.

It's a pattern that plays out with every major conflict now, and it's only getting worse. To tackle this, news organisations - like The New York Times and BBC - have set up their own dedicated teams to verify whether information is real.

There are a lot of techniques that they can use to check images and video clips. For example, they can run a reverse image search and try to find earlier versions of that image. Google’s own tool is incredibly useful for this, as it can spot when the image or video was first uploaded to the web.

These news companies are also partnering with tech startups, such as Reality Defender, to check if the image is a deepfake or was generated with an AI model. These platforms are able to spot subtle mistakes in the image, like mismatched earrings or unnatural skin tones.

They’re incredibly powerful and, in my experience, they work very well. However, they are quite expensive to run - so these companies will partner with media companies and offer them discounted prices.

With the huge advances in image and video generators, it’s a constant arms race to develop technology that can identify these fake images and prevent misinformation from being spread online.

🛡️ OpenAI reveals an AI agent that can scan code for security vulnerabilities

🕶️ Samsung plans to launch its first smart glasses this year

⚛️ Bill Gates’ TerraPower gets approval to build new nuclear reactor

📈 Claude sees rapid consumer growth, following clash with the Pentagon

🔞 Indonesia and India's Karnataka plan to ban social media for under-16s

🎬 Luma launches AI agents for the creative industry and marketing

🎙️ Claude Code rolls out voice mode

🚀 OpenAI releases GPT-5.4 with Pro and Thinking versions

📚 AI translations are adding hallucinated sources to Wikipedia

🧑‍💻 OpenAI is developing a rival to Microsoft’s GitHub

📹 Google Home can now answer questions about your live camera feed, like “Is Liam wearing a bicycle helmet?”

💡 Nvidia’s investing $4 billion in photonics to stay ahead of the curve in AI

💻 New MacBook Pros are up to $400 more expensive, thanks to the RAM shortage

🎲 Polymarket saw $529 million traded on Iran war bets

Science Corp

This startup was founded by Neuralink’s former president and has raised $230 million to improve its brain implant technology. Following the new fundraising, Science Corp is now valued at $1.5 billion.

The company's near-term bet is PRIMA - a tiny chip that’s inserted in the eye and can pair with smart glasses. Ultimately, the team wants to use this technology to restore vision for people with advanced macular degeneration.

If this sounds familiar, it’s the same company that I covered back in October, whose breakthrough technology has allowed blind patients to read again.

The clinical results look really impressive. Across 47 blind patients in Europe and the US, 80% saw improvements in their vision - allowing them to read letters, numbers, and words.

On the regulatory front, Science Corp has applied for the EU’s CE mark and expects to get approval this summer - with Germany likely serving as its first market.

If that timeline holds up, Science Corp would beat every other BCI company to market with an actual product that’s available to patients. It would be a huge milestone, as the sector has long promised what’s possible but struggled to make it a reality.

Going beyond vision, the company is pursuing some really ambitious projects. They’re now developing a device that can be placed on the brain’s surface, and are investigating how we can better preserve organs ahead of surgery.

With this new round of funding, the company has what it needs to invest in this research and bring its first products to market. IQT, an investment arm for the US intelligence agencies, has come on board as a notable investor.

It’s clear that the US intelligence community sees serious potential in this technology, as brain-computer interfaces could allow soldiers to make faster decisions and Science Corp’s work on organ preservation would be incredibly useful for American field hospitals.

If you want to learn more about the company, I’ve included a link to it below.

This Week’s Art

Loop via OpenAI’s image generator

We’ve covered quite a bit this week, including:

AI tools that can now unmask anonymous accounts

How Claude discovered 22 security vulnerabilities in Firefox's codebase

Why Cluely's CEO has admitted to fabricating his startup's revenue numbers - and what it says about the industry's hype problem

AWS's decision to launch a new AI agent platform built specifically for healthcare

Why AI-generated art still can't be copyrighted in the US, after the Supreme Court refused to intervene

How news organisations are fighting back against deepfakes

And Science Corp’s implants that can restore vision for the blind

If you found something interesting in this week’s edition, please feel free to share this newsletter with your colleagues.

Or if you’re interested in chatting with me about the above, simply reply to this email and I’ll get back to you.

Liam

About the Author

Liam McCormick is a Senior AI Engineer and works within Kainos' Innovation team. He identifies business value in emerging technologies, implements them, and then shares these insights with others.