Welcome to this edition of Loop!

To kick off your week, I’ve rounded up the most important technology and AI updates that you should know about.

HIGHLIGHTS

OpenAI finally releases GPT-5

Why NASA is planning to build a nuclear reactor on the moon

How DeepMind is bringing us closer to AI-generated games

… and much more

Let's jump in!

1. Vogue receives backlash for featuring an AI-generated model

We start this week with Vogue, which has faced controversy over an advert that includes AI-generated models. The Guess ad has raised questions about the profession’s future and whether “AI models” will be used to promote unrealistic beauty standards.

Part of the reason brands are experimenting with AI models is marketing, which has worked well for Guess, but it’s also down to simple economics. The world has changed dramatically in the last two decades, with the explosion of social media and the constant need to generate digital content.

Brands need thousands of content pieces for social media and e-commerce, not the four big campaigns they used to produce annually. That can take a lot of time and, crucially, money to organise.

By contrast, these digital avatars don’t need flights or hotels. Plus, they can wear an infinite number of outfits in any location.

The technology is still quite primitive and requires a lot of work to perfect the results, so it’s not as simple as some people suggest. There’s also the possibility that the product will look different in an AI ad compared to real life.

That could lead to a lot of angry customers, especially when they’re spending a lot of money on the item. Some companies are also betting that authenticity is a competitive edge over their rivals, which is core to a brand’s success on growing platforms like TikTok.

For these reasons, I don’t expect many companies to start using the technology - besides the occasional ad. But the technology is advancing fast, as shown by recent progress by Google and Alibaba. In five years’ time, that might change completely.

2. Anthropic releases Claude Opus 4.1 as their flagship model

You might have missed Anthropic’s announcement of Opus 4.1, following the huge media coverage of OpenAI’s GPT-5 model (which I cover further down).

The company has pitched it as an incremental upgrade, with the promise of “substantially larger improvements” in the coming weeks.

Opus is their flagship model and often excels at difficult tasks, such as reasoning, writing and understanding huge code files. As a result, it uses a lot of compute and is quite costly to run - but it’s by far the best LLM out there.

You should already have access to the model, especially if you’re a paid user, so go and test it out for yourself. It’ll be interesting to see what else Anthropic has planned for the coming weeks.

3. NASA accelerates plans to build a nuclear reactor on the moon

Following plans by China and Russia to develop a nuclear reactor on the moon, the US has reacted and promises to do it five years earlier than its rivals.

The space agency's new interim administrator, Sean Duffy, has directed teams to prepare a 100-kilowatt nuclear reactor for lunar deployment by 2030 – more than double the power output originally planned.

It's a bold timeline, especially considering NASA's previous Fission Surface Power Project targeted a more modest 40 kilowatts.

The urgency stems from mounting competition with China and Russia, who've announced their own plans for a lunar nuclear reactor by 2035.

Duffy's directive explicitly frames this as a race, warning that whoever deploys first could potentially establish "keep-out zones" that might hamper rival missions.

Unlike solar panels, reactors can run continuously through the moon's fortnight-long nights, powering life support and communications without interruption.

This reliability becomes crucial for exploring permanently shadowed regions, where scientists suspect water ice exists. Weirdly, this is almost identical to the plot in “For All Mankind”. Maybe it wasn’t so far-fetched after all.

4. AI coding startups are toast, despite rapid growth in revenues

The AI coding assistant gold rush might have a fundamental problem: the economics don't work. A good example of this is Windsurf.

The startup was gaining traction with software developers and was aiming to raise funding at a $2.85 billion valuation in February, but they completely changed course just a few months later.

By April, they agreed to sell the company to OpenAI for $3 billion instead. This deal eventually fell through, with Google securing the top talent and the remaining business sold to Cognition.

But why would a fast-growing company, with revenues rising to over $82 million in a few months, want to suddenly exit? Simply put, the business was bleeding money.

AI coding tools face "very negative" gross margins, meaning they lose money on every customer. This is due to their reliance on language models, which cost eye-watering sums of money to run.
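To make the margin point concrete, here’s a rough sketch of the arithmetic. The subscription price and inference cost are made-up numbers for illustration only, not figures from Windsurf, Cursor, or anyone else.

```python
def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Gross margin as a fraction of revenue: (revenue - cost) / revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cost_of_revenue) / revenue


# Hypothetical figures: a $20/month plan where one user's LLM calls cost $30/month.
monthly_price = 20.0
monthly_inference_cost = 30.0

margin = gross_margin(monthly_price, monthly_inference_cost)

# A negative margin means each subscriber costs more to serve than they pay.
print(f"Gross margin: {margin:.0%}")
```

With numbers like these, signing up more customers makes the losses bigger, not smaller. That’s why falling inference costs (or owning the model) matter so much to these startups.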

Even Anysphere's Cursor, despite hitting $500 million in annual recurring revenue, isn't immune. They've started passing costs on to power users and are desperately trying to build their own models to escape the margin squeeze.

The industry is hoping that inference costs will plummet over time, but that isn’t guaranteed. Windsurf is a clear warning for the wider AI industry.

As companies invest billions in the technology and create new reasoning models that cost even more to run, they may not see a return for many years. With the inflated valuations we see in the stock market, that optimism won’t last forever.

It seems that the only players who can absorb these costs are the big tech companies, not smaller startups like Windsurf.

5. Voice phishing attack was used to steal customer info from Cisco

Cisco has fallen victim to a sophisticated voice phishing attack, which has allowed the bad actor to steal customer information.

Essentially, the cybercriminal used an AI model to replicate a person’s voice. They then convinced Cisco staff to let them access the company’s Salesforce data.

The stolen data includes customer names, organisation details, addresses, email addresses, phone numbers, and various account metadata - including creation dates.

What's particularly telling is what Cisco isn't saying. The company has remained tight-lipped about the scale of the breach, with their spokesperson declining to specify how many users were affected.

The incident appears to be part of a broader campaign targeting Salesforce customers. Allianz Life, Tiffany & Co., and Qantas have already suffered similar attacks.

Unfortunately for these companies, voice phishing is tricky to prevent. Most companies spend millions on training for their employees, but it’s not always effective and is (let’s face it) quite boring.

Luckily, there are more tools available to detect these voice clones - but they’re not cheap to run and not feasible for every single phone call. The only solution is to better utilise multi-factor authentication and verify the person’s identity in several ways - not just one.

OpenAI’s GPT-5 is finally here, but it fails to meet the hype

OpenAI has unveiled GPT-5, marking what the company calls a significant leap towards artificial general intelligence. The model represents OpenAI's first "unified" system, blending the reasoning capabilities of its o-series models with the rapid response times from GPT-4o.

In summary, OpenAI is promising that the model is more accurate (has dramatically fewer hallucinations), can pick the best model option for your task (reasoning vs non-reasoning variants), and is better at coding than ever before.

From my own testing, the model definitely seems faster than before. The results seem better, but I’ll need to spend more time with it.

The “routing” feature, which allows the model to automatically select the best option for you, sounds good in theory but wasn’t useful for me. Instead, I became frustrated when the model didn’t select the “longer thinking” option and ended up selecting it myself.

Plenty of people online have given their views on the model. Many are furious that OpenAI has removed access to their older models, with only GPT-5 available for paid users.

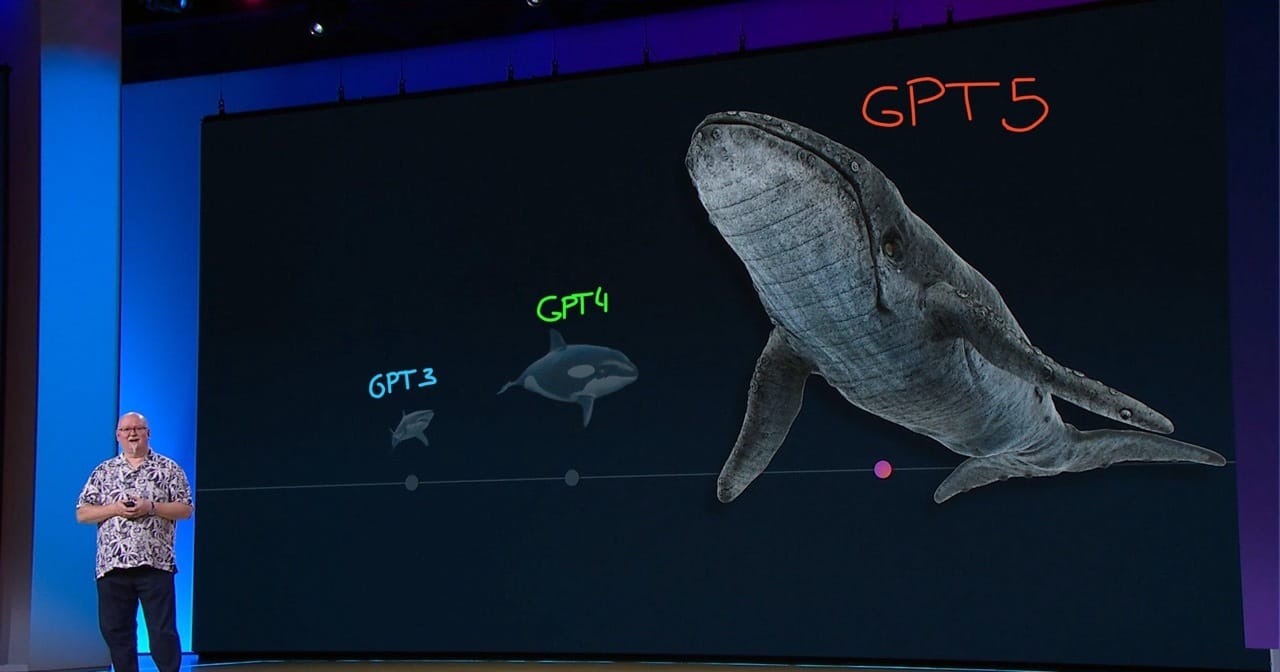

However, I’m a bit disappointed that the model hasn’t met the expectations set by OpenAI and Microsoft. A year ago, Microsoft’s CTO Kevin Scott presented an infamous graphic suggesting that GPT-5 would be around 10x bigger and more powerful than GPT-4.

Did that happen? No, it didn’t. Similarly, OpenAI’s Sam Altman has claimed that GPT-5 has “PhD-level intelligence”. Is that true? No, it’s not. The model still regularly gets simple questions wrong and makes very basic mistakes.

When GPT-5 became available to users at the weekend, that gulf between hype and reality quickly came into focus. Many complained online and said that it was a “downgrade” at certain tasks. Some even asked OpenAI to allow access again to their older models.

Unfortunately for OpenAI, their launch of GPT-5 has fallen flat and upset plenty of their user base - but we’ll need to spend more time with the model and properly evaluate what it’s able to do.

For years, GPT-5 was pitched as a significant improvement and executives promised that it would move the industry forward. Thousands of users have disagreed and they instead see it as an incremental improvement, not a fundamental shift.

While OpenAI can clearly win that trust back, the launch of GPT-5 has highlighted the risk in overhyping your products and promising too much.

DeepMind brings us closer to AI-generated games

Google DeepMind has unveiled Genie 3, a foundation world model that could change how we train AI systems - especially robotics and autonomous vehicles.

Unlike its predecessors, this isn't just another environment generator. DeepMind calls it the first “real-time” world model.

Genie 3 can create an interactive 3D world and show it at an impressive 720p resolution. The world itself can run for several minutes, which is a significant improvement over previous versions.

The older Genie 2 could only run these interactive worlds for 10-20 seconds.

But what’s interesting is that Genie 3 has been able to understand physics on its own, without any help from Google’s researchers. This includes learning how objects fall, collide, and interact with each other.

Its auto-regressive approach - generating one frame at a time whilst looking back at previous ones - gives the model an almost human-like grasp of cause and effect.
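If “auto-regressive” sounds abstract, here’s a toy sketch of the loop. To be clear, this is not how Genie 3 is implemented: the frames are just short lists of numbers, and `toy_model` is a hypothetical stand-in for the real neural network. It only shows the shape of the idea, where each new frame is predicted from the recent frames plus the player’s action.

```python
from collections import deque
from typing import Callable, Deque, List

Frame = List[float]  # stand-in for an image; a real frame would be a pixel array


def generate(
    first_frame: Frame,
    actions: List[float],
    predict_next: Callable[[List[Frame], float], Frame],
    context_size: int = 4,
) -> List[Frame]:
    """Produce one frame per action, each conditioned on the most recent frames."""
    # Only the last `context_size` frames are kept as context for the model.
    history: Deque[Frame] = deque([first_frame], maxlen=context_size)
    frames = [first_frame]
    for action in actions:
        next_frame = predict_next(list(history), action)
        history.append(next_frame)  # the new frame becomes context for the next step
        frames.append(next_frame)
    return frames


def toy_model(history: List[Frame], action: float) -> Frame:
    """Hypothetical 'model': shifts every value in the latest frame by the action."""
    return [value + action for value in history[-1]]


frames = generate([0.0, 0.0], actions=[1.0, 1.0, -0.5], predict_next=toy_model)
print(frames)
```

Because every frame depends on the ones before it, a mistake early on carries forward. That is part of why keeping a world consistent for several minutes is harder than doing it for a few seconds.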

DeepMind has since tested this with their SIMA agent, which is able to successfully navigate Genie’s warehouse environments and complete specific tasks.

All the top tech companies - such as Microsoft, Meta, and Google - are actively researching how AI-generated images can be used to train simple robots.

For example, they’ve used GenAI to create thousands of images that show objects on a desk. These were then used to train a robotic arm and allow it to move those objects around a room in real life.

With advances like Genie 3, where we can create realistic worlds using simple text, this could lead to breakthroughs in robotics and allow robots to gain new skills.

It could also be used to create short games in a few hours, with the AI model able to simulate an infinite number of worlds - from almost any perspective.

💰 Tesla hands a $29 billion pay package to Elon Musk

✈️ Joby Aviation buys a rideshare business for $125 million

🚕 Lyft and China's Baidu will bring robotaxis to Europe next year

🍎 Apple announces another $100 billion to boost US manufacturing

🕸️ Perplexity accused of scraping websites that blocked AI scraping

🎵 ElevenLabs launches an AI music generator, approved for commercial use

🔒 Cohere's new AI agent platform will keep your enterprise data secure

🚗 You can now buy a used car on Amazon (if you live in LA)

⚡ Mercedes-Benz plans a flurry of new EVs

🛡️ Microsoft develops an AI agent that can detect malware

💥 US military plans to shoot missiles at Tesla's Cybertruck

Tavily

For several months now, I’ve been building Tavily’s tools into my AI agents at work. I’m actually surprised that I haven’t covered them in this newsletter before.

The startup offers an easy way for developers to add web search to their software. For example, I’ve created AI agents that can quickly research rival companies and create in-depth reports.

To do this, I used Tavily’s search tool and gave the agent access to it. The agent then decides when to use the web search and what the query should be. It’s an affordable way to do this, as you get $8 of free credit every month.
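Here’s a minimal sketch of that pattern, so you can see how the pieces fit together. Everything in it is a stand-in: `fake_search` returns canned text instead of calling Tavily, and `choose_query` is a simple rule where a real agent would let the language model decide. None of these names come from Tavily’s SDK.

```python
from typing import Callable, Dict, List, Optional

SearchFn = Callable[[str], List[str]]


def fake_search(query: str) -> List[str]:
    """Stand-in for a web search tool; returns canned results, makes no network call."""
    return [f"Result about {query}"]


def choose_query(question: str) -> Optional[str]:
    """Stand-in for the model's decision: return a search query, or None to skip search.

    A real agent would let the LLM make this call. This toy rule searches
    only when the question asks for something recent.
    """
    if "latest" in question.lower():
        return question
    return None


def run_agent(question: str, search: SearchFn) -> Dict[str, object]:
    """Decide whether to search, run the tool if needed, and report what happened."""
    query = choose_query(question)
    results = search(query) if query is not None else []
    return {"question": question, "searched": query is not None, "sources": results}


print(run_agent("What is the latest funding round for Acme Corp?", fake_search))
print(run_agent("What is 2 + 2?", fake_search))
```

Passing the search function in as an argument keeps the agent logic separate from the provider, which makes it easy to swap in a real search client later or test without using any credit.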

The company has just secured $25 million in funding, as they hope to invest more in their product line-up and attract more enterprise customers.

Tavily now provides several companies - including Groq, MongoDB, and Writer - with tools that allow their agents to search both public and private data sources.

Their nearest competitor is Exa, which offers a very similar product but gives you more control over the data sources you can search through - such as LinkedIn.

Both are great products and can work for slightly different use cases, but I’ve generally found that Tavily’s results are better - especially when you enable advanced search.

If you want to learn more about Tavily, you can check out the link below.

This Week’s Art

Loop via OpenAI’s image generator

We’ve covered quite a bit this week, including:

The backlash against Vogue’s AI-generated model

Anthropic’s update to Claude Opus 4.1

Why NASA is planning to build a nuclear reactor on the moon

How AI coding startups are toast, despite rapid growth in revenues

The voice phishing attack that stole customer info from Cisco

OpenAI finally reveals GPT-5, but it fails to meet the hype

How DeepMind is bringing us closer to AI-generated games

And how Tavily is connecting AI agents to the web

If you found something interesting in this week’s edition, please feel free to share this newsletter with your colleagues.

Or if you’re interested in chatting with me about the above, simply reply to this email and I’ll get back to you.

Have a good week!

Liam

About the Author

Liam McCormick is a Senior AI Engineer and works within Kainos' Innovation team. He identifies business value in emerging technologies, implements them, and then shares these insights with others.