Welcome to this edition of Loop!

To kick off your week, I’ve rounded up the most important technology and AI updates that you should know about.

HIGHLIGHTS

How AI-powered satellites are transforming the way we gather intelligence from space

Microsoft's Copilot bug that exposed confidential emails

Google's AI model that can now generate music

… and much more

Let's jump in!

1. ByteDance gives in to a frightened Hollywood

We start this week with Hollywood, as movie executives are becoming increasingly worried about AI and how it will impact their intellectual property. ByteDance is facing significant legal pressure from Hollywood, following the release of a new AI video generator that can produce incredibly realistic clips.

Thousands of people are now using the technology and featuring real actors - such as Tom Cruise, Brad Pitt, and Tom Hanks - in their short films. You can also include characters from popular TV shows, like Dragon Ball Z, Family Guy, and Pokémon - angering movie executives who see this as IP theft.

Disney has sent a cease and desist letter accusing ByteDance of "hijacking" its protected characters, while Paramount Skydance followed suit and demanded that the company remove any infringing content.

As I mentioned last week, the Motion Picture Association called it copyright infringement at a "massive scale," and SAG-AFTRA has said that the tool is a threat to the actors' livelihoods.

Following the outcry, ByteDance has agreed to place more restrictions on the AI model and characters that are generated. But the genie is out of the bottle now, as these models are advancing faster than the guardrails around them.

ByteDance can add more restrictions, but users will always find a way around them. Instead of saying “Homer Simpson” in their prompt, they can describe a yellow cartoon character that works in a nuclear power plant.
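To see why name-based restrictions are easy to sidestep, here is a minimal sketch of a keyword blocklist. It is purely illustrative: the `BLOCKED_NAMES` set and the `is_blocked` function are hypothetical, and nothing here reflects how ByteDance's actual safeguards work.

```python
# Hypothetical blocklist filter, for illustration only.
# This is NOT ByteDance's real safeguard; names and logic are assumptions.
BLOCKED_NAMES = {"homer simpson", "tom cruise", "brad pitt"}


def is_blocked(prompt: str) -> bool:
    """Return True if the prompt mentions a blocked name (case-insensitive)."""
    text = prompt.lower()
    return any(name in text for name in BLOCKED_NAMES)


direct = "Homer Simpson eating a doughnut"
indirect = "A yellow cartoon character that works in a nuclear power plant"

print(is_blocked(direct))    # True: the name is matched directly
print(is_blocked(indirect))  # False: the description never uses the name
```

A filter like this only catches the exact words it was told to look for, which is why a description of the character passes straight through.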

Or they can use open-source models and avoid these restrictions entirely. Instead of threatening legal action against tech companies, Hollywood needs to come to terms with this new technology and embrace it - like they did with iTunes and online downloads.

If they don’t, the tech will continue to progress without them.

2. AI satellites are changing how governments gather intelligence

For years, I have been flagging this as an area with huge potential. We’ve seen major advances in how AI models analyse images, which allows both governments and private companies to apply them to satellite imagery and gather intelligence much faster.

This is useful for governments, as they can monitor battlefields around the world and detect when a hostile state is building new infrastructure. It’s also useful for private companies, who are interested in what their competitors are doing and can use these insights to make investment decisions.

Vantor (formerly known as Maxar) has struck a deal with Google to bring the Earth AI models into its Tensorglobe platform - and it's a significant move for the geospatial intelligence space.

The partnership makes Vantor the first satellite company to deploy Google's Earth AI models in classified and air-gapped government environments, where the most advanced AI tools simply can't operate.

These new AI models can identify buildings, assess the damage caused by a recent storm, and analyse the wider area for insights. This will prove incredibly powerful for government analysts, as they can automate part of this process and gather intelligence on enemy targets much faster than before.

Interestingly, Vantor can also fine-tune these models and use the customer's own sovereign data - allowing them to detect even more objects from space.

It's worth watching how this develops. I’ve been following this area for a very long time and I see a lot of potential here for gathering intelligence - whether it’s for governments or the private sector.

3. OpenClaw founder Peter Steinberger is joining OpenAI

OpenAI has hired Peter Steinberger, the creator of OpenClaw, just a few weeks after the AI agent went viral. According to Sam Altman, the developer will join OpenAI’s product team and help guide their decisions on multi-agent systems.

If you haven’t heard of multi-agent systems before, these are essentially bots that can communicate with each other and autonomously solve tasks.

Steinberger’s project (which went through the names Moltbot and Clawdbot before settling on its current branding) quickly became one of the most talked-about AI agent platforms in the industry.

That said, the journey hasn't been entirely smooth - security researchers have recently discovered more than 400 malicious skills on ClawHub and raised concerns over the risks they pose.

While the financial details remain under wraps, Sam Altman did confirm that OpenClaw will carry on as an open-source project and will be supported by OpenAI.

4. Microsoft exposes confidential emails with its Copilot AI

Microsoft has found itself in hot water after admitting that its AI assistant, Copilot Chat, was mistakenly sharing confidential emails with users - affecting private companies that have enabled the chatbot.

The bug meant that the tool - which has been pitched to businesses as a secure AI chatbot - was pulling content from their “drafts” and “sent” folders in Outlook. It even happened when these emails were labelled as confidential and had data policies set up to lock down access.

Microsoft insists that nobody has gained access to information they weren't already authorised to see, and a fix has since been rolled out. But the optics aren't great here.

The issue has also affected the NHS in England, which stores data on millions of patients. Thankfully, no patient data has been compromised in this instance - but it raises serious questions about what data we should allow these chatbots to access.

To take advantage of these AI tools, companies have to grant them access to their most sensitive data. But this also elevates the risks, as one mistake can expose all of that data to people who should never see it.

Microsoft understands this concern and has built a huge reputation for AI security, so this is quite an embarrassing mistake for them to make. With more companies uploading their confidential data to these tools, we should expect a lot more of these stories in future.

5. Google’s Gemini can now create music for you

With Google’s Lyria 3 model, you can ask Gemini to generate music tracks. These can be up to 30 seconds long, and you can prompt it with text, images, or even video.

To get started, you can describe a genre, a mood, or a memory, and Gemini will create the track in a few seconds - along with lyrics and cover art. Or you can upload photos from a holiday and it'll try to match the vibe musically.

The tool is now available in the Gemini app, but you have to be over 18 to use it. Lyria 3 is also heading to YouTube's Dream Track, letting creators generate AI soundtracks for Shorts.

The AI model can’t clone specific artists, as Google is worried about the impact it would have on copyrighted music. Instead, the model can produce tracks that share a "similar style or mood".

Whether that's enough to satisfy the music industry remains to be seen. They could also launch a campaign against the tool, similar to Hollywood’s action against ByteDance, if they believe it’s overstepping the mark and copying their work.

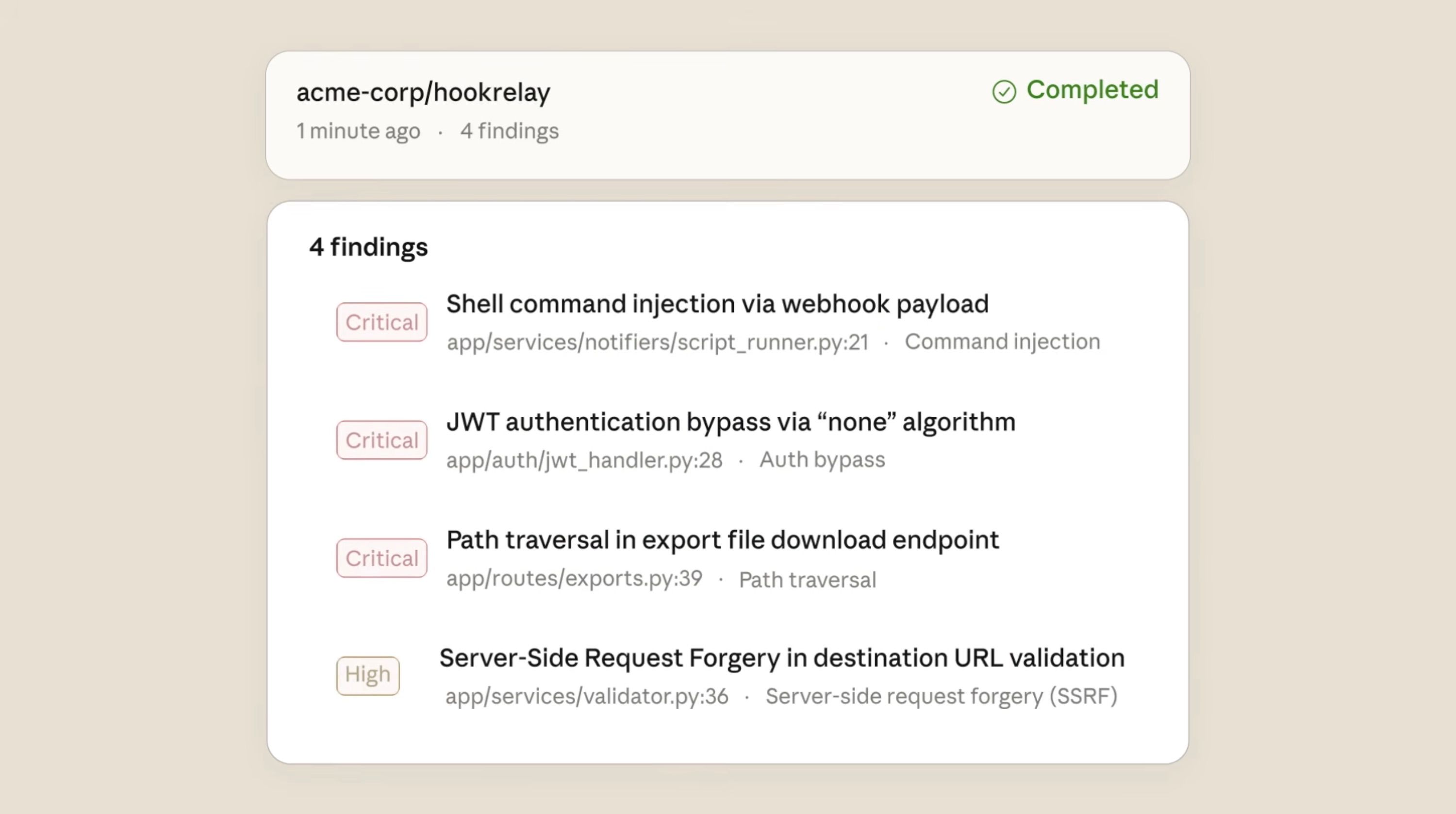

6. Anthropic’s AI agent can spot security vulnerabilities

Anthropic has launched Claude Code Security, a new tool that can scan codebases for vulnerabilities and suggest patches - essentially giving security teams access to an AI-powered researcher.

The tool works differently from traditional static analysis, which focuses on matching the code against known vulnerabilities and patterns.

Instead, Anthropic’s agent can review code the same way a human security researcher would - by tracing the data as it passes through the system and flagging the subtle bugs that rule-based scanners can miss.
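As a rough illustration of that difference, the toy sketch below compares a single pattern rule with a very simple data-flow trace. It is an assumption-laden example, not Anthropic's implementation: `SAMPLE`, `RULE`, `rule_based_findings`, and `data_flow_findings` are all hypothetical names, and the trace only follows top-level assignments.

```python
import ast
import re

# Hypothetical vulnerable snippet: user input reaches a SQL query in two steps.
SAMPLE = '''
name = input()
query = "SELECT * FROM users WHERE name = '" + name + "'"
cursor.execute(query)
'''

# Pattern rule: flags only string building written directly inside execute(...).
RULE = re.compile(r"execute\(.*(\+|%|format\()")


def rule_based_findings(source: str) -> list[int]:
    """Return line numbers where the pattern rule matches."""
    return [i for i, line in enumerate(source.splitlines(), start=1)
            if RULE.search(line)]


def names_in(node: ast.AST) -> set[str]:
    """Collect every variable name used inside an expression."""
    return {n.id for n in ast.walk(node) if isinstance(n, ast.Name)}


def is_source(node: ast.AST) -> bool:
    """True if the expression contains a call to input()."""
    return any(
        isinstance(n, ast.Call)
        and isinstance(n.func, ast.Name)
        and n.func.id == "input"
        for n in ast.walk(node)
    )


def data_flow_findings(source: str) -> list[int]:
    """Follow untrusted data through assignments and flag execute() calls."""
    tainted: set[str] = set()
    findings: list[int] = []
    for stmt in ast.parse(source).body:  # top-level statements, in order
        if isinstance(stmt, ast.Assign):
            if is_source(stmt.value) or names_in(stmt.value) & tainted:
                for target in stmt.targets:
                    if isinstance(target, ast.Name):
                        tainted.add(target.id)
        for node in ast.walk(stmt):
            if (isinstance(node, ast.Call)
                    and isinstance(node.func, ast.Attribute)
                    and node.func.attr == "execute"
                    and any(names_in(arg) & tainted for arg in node.args)):
                findings.append(node.lineno)
    return findings


print(rule_based_findings(SAMPLE))  # [] - the rule sees nothing suspicious
print(data_flow_findings(SAMPLE))   # [4] - the trace follows input to execute()
```

The pattern rule misses the bug because the query is built on one line and executed on another, while the trace catches it by following the data from `input()` to `execute()`.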

Every detection will go through a verification process, where Claude essentially tries to prove itself wrong before it flags the issue to a human analyst. These results are then shown with different severity ratings and confidence scores, but no action is taken without a human checking it first.

Using Claude Opus 4.6, Anthropic's team has discovered over 500 vulnerabilities in open-source codebases. That's a striking number, and it highlights just how effective AI has become at finding vulnerabilities - which can be missed by humans.

Of course, it’s a double-edged sword. AI can help defenders, but it also allows the attackers to exploit weaknesses faster than ever. It's still very early days, but if the results hold up, this could really change how organisations approach cybersecurity.

Anthropic is making this tool available to a select number of organisations, which must apply for access and be approved first. If you want to access the new tool, I’ve included a link below.

🌍 World Labs raises $1 billion to fund their work on world models, Autodesk invests $200 million

🤖 Meta patents an AI that can keep posting after you die (Black Mirror anyone?)

💾 Memory exec warns that skyrocketing RAM prices could kill products and entire companies

💰 Meta agrees to buy millions of AI chips from Nvidia

🖥️ Anthropic's new Sonnet 4.6 model is better at using computers

✍️ WordPress' AI assistant will let you edit your website with prompts

🏆 Google's new Gemini Pro model sets record benchmark scores, yet again

🤖 Toyota will use seven humanoid robots at its Canadian factory

🚕 New York pauses plan to expand the testing of robotaxis

🗳️ Meta will spend $65 million on elections and try to limit future AI legislation (NYT)

💵 OpenAI is finalising a $100 billion deal that values the company at more than $850 billion

🌐 Cohere launches its family of open multilingual models

Freeform

This startup wants to scale 3D printing and rival traditional manufacturing - using hundreds of lasers and real-time AI to do it.

The company was founded in 2018, after its CEO, Erik Palitsch, became frustrated with the slow machines that SpaceX used to print metal engine parts. He then started from scratch, with a new system designed to use 18 lasers and fuse metal powders into precision components.

But the real ambition lies in its new system, which would scale that up to hundreds of lasers. These would be able to churn out thousands of kilograms of parts, every single day.

To achieve this, the company has raised $67 million in Series B funding - with Founders Fund and Nvidia joining as investors.

But what makes Freeform particularly interesting is its "AI native" approach.

The company runs physics simulations in real-time, with Nvidia H200 GPU clusters being housed in its own data centre - a setup that’s incredibly rare for a manufacturing outfit.

That data flywheel is already paying off, with Freeform claiming to hold more meaningful data on metal-printing physics than anyone else in the sector.

The company is now shipping hundreds of mission-critical parts every week, with plans to hire around 100 staff and tackle its growing backlog. It's a bet that manufacturing can move at the speed of software - and investors are clearly on board.

This Week’s Art

Loop via OpenAI’s image generator

We’ve covered quite a bit this week, including:

Why ByteDance's decision to restrict its AI video generator won't be enough to satisfy Hollywood's growing concerns over IP theft

How AI-powered satellites are transforming the way governments and private companies gather intelligence from space

Why OpenAI's hire of OpenClaw creator Peter Steinberger signals a bigger push into multi-agent systems

Microsoft's Copilot bug that exposed confidential emails - and what it means for companies trusting AI with their data

Google's AI model that can now generate music tracks from text, images, and video

Anthropic's new security tool that can find the vulnerabilities that traditional scanners miss

And how Freeform is using hundreds of lasers and real-time AI to scale 3D printing

If you found something interesting in this week’s edition, please feel free to share this newsletter with your colleagues.

Or if you’re interested in chatting with me about the above, simply reply to this email and I’ll get back to you.

Liam

About the Author

Liam McCormick is a Senior AI Engineer and works within Kainos' Innovation team. He identifies business value in emerging technologies, implements them, and then shares these insights with others.